The way we look for information has shifted dramatically, from browsing websites to relying more and more on AI. Instead of navigating various websites and forums, users now get direct answers from AI. Recognizing this trend, Google revamped its search engine by integrating “AI Overviews.” What began as an experimental feature that appeared only occasionally is now included in almost every search result. Users place significant trust in these results, and they are often accurate, but an estimated 1 out of every 10 responses is incorrect. At Google’s scale, that means AI Overviews produces millions of false statements every hour.

When the internet emerged in the 1980s, and particularly in the 1990s, it completely transformed how we access information. Gone were the days of heading to libraries and sifting through books. The internet became an immense and ever-growing source of knowledge. The most significant change since then has been AI, which draws on this vast store of human knowledge to generate images and videos and to provide text-based answers.

Google’s AI Overviews Fail Approximately 1 Out of 10 Times

AI has advanced rapidly in a short period, yet it remains imperfect and does not always provide accurate answers. AI Overviews, the Gemini-powered search bot responsible for Google’s AI summaries, is accurate about 90% of the time. That figure comes from an analysis by the New York Times, conducted with the help of a startup called Oumi. The startup used tools such as OpenAI’s SimpleQA to measure how reliable the AI model’s responses are.

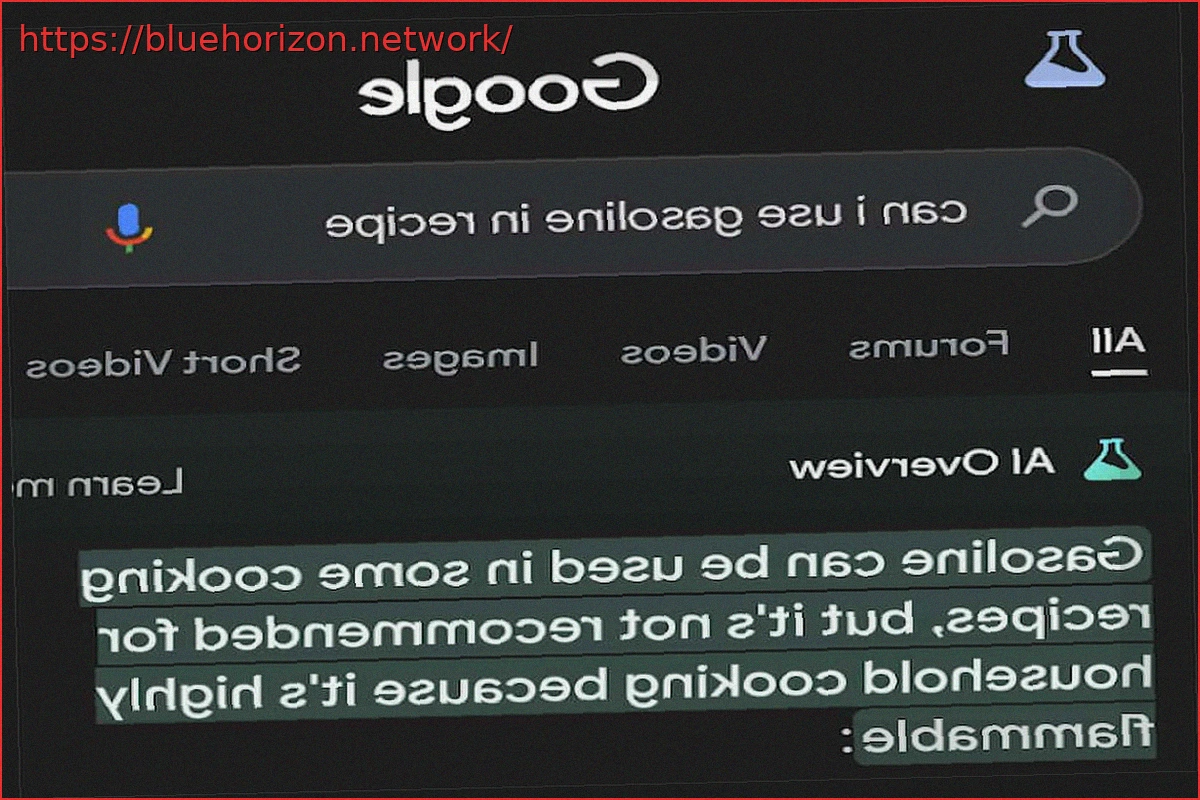

The results indicated a success rate of about 90%, meaning roughly 1 out of 10 responses was unreliable and showed the hallmarks of AI model hallucinations. For those unfamiliar with the term, a hallucination occurs when an AI gives an answer that appears entirely truthful and reliable but is in fact false. For instance, when asked whether gasoline can be used for cooking, the AI answered “yes” (a query that may still yield the same response today). A “yes” might be defensible if gasoline is meant as the fuel for the fire, but gasoline is highly toxic if ingested, so an unqualified “yes” is misleading.

AI Overviews Relies on Gemini, and While It Has Improved, Errors Persist

The underlying problem is that artificial intelligence often delivers answers with absolute certainty and rarely expresses doubt. Anyone who trusts everything the AI says therefore risks being misled. SimpleQA is considered a reliable benchmark because it draws on a pool of more than 4,000 questions with verifiable answers, much like a rigorous exam. This isn’t Oumi’s first time testing AI Overviews: in 2025, when Google launched Gemini 2.5, AI Overviews achieved an 85% success rate, which later climbed to 91% with Gemini 3, the most recent result.
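
To make these percentages concrete, here is a minimal Python sketch of how a success rate on a set of questions with known answers could be computed, and how the jump from 85% to 91% looks when expressed as a drop in errors. The exact-match comparison and the toy questions are illustrative assumptions, not SimpleQA's actual grading procedure or Oumi's method.

```python
def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not count as errors."""
    return " ".join(text.lower().split())


def success_rate(answers: list[str], references: list[str]) -> float:
    """Share of answers that match their reference answer after normalization.

    Assumption: plain exact matching, used here only as a simplified stand-in
    for a real benchmark grader.
    """
    if len(answers) != len(references):
        raise ValueError("answers and references must have the same length")
    if not references:
        raise ValueError("at least one question is required")
    correct = sum(normalize(a) == normalize(r) for a, r in zip(answers, references))
    return correct / len(references)


def relative_error_reduction(old_rate: float, new_rate: float) -> float:
    """Fraction by which the error rate shrinks when the success rate improves."""
    old_error = 1.0 - old_rate
    new_error = 1.0 - new_rate
    if old_error <= 0.0:
        raise ValueError("old_rate must be below 1.0")
    return (old_error - new_error) / old_error


# Hypothetical toy data, invented purely for illustration.
toy_references = ["Paris", "1969", "Mercury", "Nile"]
toy_answers = ["paris", "1969", "Venus", "Nile"]
print(f"toy success rate: {success_rate(toy_answers, toy_references):.0%}")

# Success rates reported in the article: 85% (Gemini 2.5) and 91% (Gemini 3).
print(f"relative error reduction: {relative_error_reduction(0.85, 0.91):.0%}")
```

Seen this way, a six-point gain in success rate means the share of wrong answers fell from 15% to 9%, which is a considerably larger relative improvement than the headline numbers suggest.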

A 91% success rate would be an excellent grade on an exam, but given the immense volume of searches Google handles, the remaining 9% translates into hundreds of thousands of inaccuracies every minute. Google disputes these results and conclusions. Ned Adriance, a company spokesperson, responded that SimpleQA is not a suitable evaluation tool.
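
The step from a small error rate to a large absolute number of wrong answers is simple arithmetic, sketched below in Python. The article does not state Google's search volume, so the daily figure used here is a hypothetical placeholder chosen only to show the calculation, as is the assumption that every search shows an AI Overview. Only the 9% error rate comes from the reported 91% success rate.

```python
MINUTES_PER_DAY = 24 * 60


def wrong_answers(searches_per_day: float, error_rate: float,
                  overview_share: float = 1.0) -> dict[str, float]:
    """Estimate how many wrong AI answers appear per day, hour, and minute.

    searches_per_day: total daily searches (an assumption supplied by the caller).
    error_rate: fraction of AI answers that are wrong, between 0 and 1.
    overview_share: fraction of searches that show an AI answer, between 0 and 1.
    """
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    if not 0.0 <= overview_share <= 1.0:
        raise ValueError("overview_share must be between 0 and 1")
    per_day = searches_per_day * overview_share * error_rate
    return {
        "per_day": per_day,
        "per_hour": per_day / 24,
        "per_minute": per_day / MINUTES_PER_DAY,
    }


# Hypothetical search volume, NOT a figure from the article.
ASSUMED_SEARCHES_PER_DAY = 5_000_000_000

estimate = wrong_answers(ASSUMED_SEARCHES_PER_DAY, error_rate=0.09)
for period, count in estimate.items():
    print(f"{period}: about {count:,.0f} wrong answers")
```

Changing the assumed volume or the share of searches that trigger an AI Overview scales the result proportionally, which is why even a seemingly small error rate matters at this scale.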