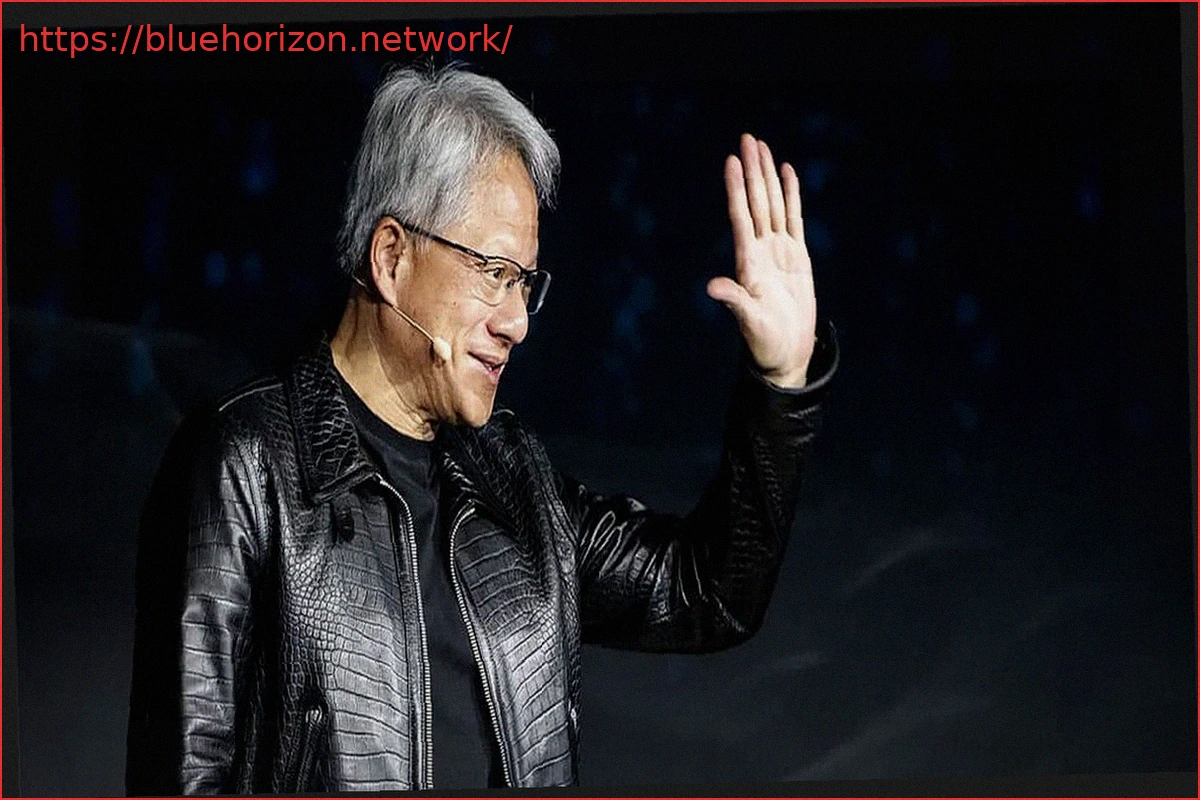

The global memory crisis isn’t hitting everyone equally, least of all Jensen Huang, who leads one of the world’s most valuable companies. While PC, console, and smartphone manufacturers grapple with rising prices and supply shortages, NVIDIA openly acknowledges that this environment works to its advantage, stating that the memory crisis is “very, very good” for the company.

Jensen Huang, NVIDIA’s CEO, explained at the Morgan Stanley Technology, Media & Telecom Conference that limitations in components, energy, and data center capacity are compelling companies to choose only the most efficient hardware. This scenario plays directly into NVIDIA’s hands, as it clearly dominates the artificial intelligence market with its high-performance solutions.

NVIDIA Sees Scarcity as a Strategic Advantage

The scarcity of DRAM, and particularly High Bandwidth Memory (HBM), has emerged as a significant bottleneck in AI infrastructure development. Demand for GPUs to train and run large AI models is growing faster than memory manufacturers can keep up with. Samsung, SK Hynix, and Micron are redirecting a substantial portion of their production towards HBM for data centers, leaving less capacity for the conventional memory used in PCs, mobile devices, consoles, and laptops. For Huang, this situation is not a problem but an opportunity.

“I think the fact that everything is scarce is fantastic for us; when it’s scarce, you choose the best,” Huang stated. His reasoning is that building AI data centers involves multi-billion-dollar investments in land, electrical power, cooling systems, and network infrastructure, all of which are limited resources. If the key hardware components are also scarce, operators are unwilling to risk investing in solutions that might fall short. Huang put it simply: “If data centers, land, power, and infrastructure are limited, you’re not going to put something random in just to try it out.”

Huang further noted that as AI models grow in complexity and scale, so do their memory requirements, putting even greater pressure on the global supply chain. His answer to this constraint is the most straightforward, if expensive, one: “You’re going to put something in that you know for sure is going to deliver the tokens per watt that you need.” In other words, when capacity is limited, buyers pay a premium for proven efficiency.

NVIDIA’s Secured Supply Chain and Full AI Infrastructure Offering

Without mincing words, Huang stated that those with significant capital will opt for the best, regardless of cost, and the AI sector is currently flush with funding. NVIDIA has also protected its own supply by securing long-term agreements across its entire supply chain. Huang asserted that NVIDIA has assured access to critical components, from memory and advanced packaging (like CoWoS) to interconnects, a level of guarantee many rival companies cannot match. “We have all the memories, we have all the wafers, we have all the CoWoS,” he said, underscoring the company’s hold on essential resources.

NVIDIA’s strategy extends beyond selling GPUs; it involves offering complete AI infrastructure systems. This includes accelerators, networking, software, and entire data centers optimized for large-scale models. Huang sent a clear message to competitors: “We are the only company in the world that can come into your company and help you build a complete AI factory.”

In a market where every megawatt of power and every gigabyte of memory is critical, neither supply restrictions nor the memory crisis hinder NVIDIA. According to Huang, these constraints become a competitive advantage. That perspective is understandable coming from the largest player in the market. As long as AI demand continues to outpace memory production capacity, NVIDIA’s strategic advantage is likely to grow even further.