At GTC 2026, NVIDIA presented a demonstration with direct consequences for how games are rendered. Unlike enhancements such as DLSS 5, this technology integrates neural networks directly into the game’s rendering pipeline. It targets two problems: the substantial size of textures and the high computational cost of complex materials. The texture side is handled by a system known as Neural Texture Compression.

This technology isn’t entirely new, so a quick refresher for context: an MLP (multilayer perceptron) is a small neural network; BCn is the standard family of block-based texture compression formats on GPUs; Neural Texture Compression is NVIDIA’s system for storing textures in a compact form that a neural network decodes; and a BRDF is the mathematical function describing how light reflects off a surface. With these terms clarified, let’s look at the details.

NVIDIA Shows Neural Texture Compression Improvements

The key highlight is that NVIDIA demonstrated two specific applications for real-time rendering: Neural Texture Compression and Neural Materials.

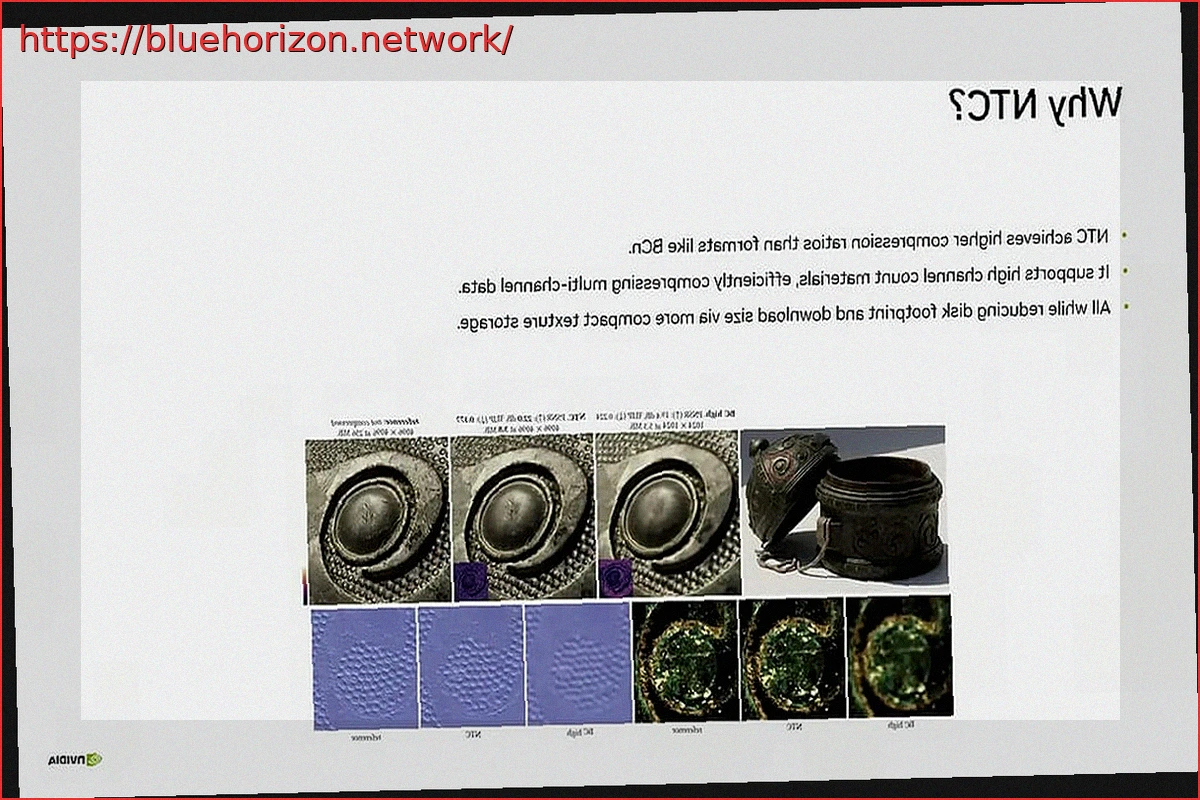

The first segment of the presentation garnered the most attention. NVIDIA showed a direct comparison: a scene rendered using traditional BCn compression consumed approximately 6.5 GB of VRAM, while the exact same scene with Neural Texture Compression applied had a memory footprint of 970 MB.

This reduction of roughly 85% (970 MB is about 15% of 6.5 GB) matters because textures consume a significant portion of memory in modern games. The core idea is not to store the complete final texture, but rather a compact latent representation that a small neural network reconstructs in real time directly on the GPU.
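To make the "latent representation plus small network" idea concrete, here is a minimal sketch in plain Python. It is not NVIDIA's actual format or decoder: the grid size, `LATENT_CHANNELS`, `HIDDEN`, the nearest-texel lookup, and the random (untrained) weights are all assumptions chosen for illustration. A real system would train the latents and weights per texture and run the decode inside a shader.

```python
import math
import random

LATENT_CHANNELS = 4   # hypothetical: values stored per latent texel
HIDDEN = 16           # hypothetical: width of the single hidden layer
OUT_CHANNELS = 3      # decoded RGB


def make_layer(n_in, n_out, rng):
    """Random weights and zero biases; a real decoder would be trained."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def relu(v):
    return max(0.0, v)


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def dense(x, layer, activation):
    """One fully connected layer: activation(W @ x + b)."""
    weights, biases = layer
    return [activation(sum(w * v for w, v in zip(row, x)) + b)
            for row, b in zip(weights, biases)]


def decode_texel(latent_grid, u, v, layers):
    """Fetch the latent vector nearest to (u, v) in [0, 1] and decode it to RGB."""
    height = len(latent_grid)
    width = len(latent_grid[0])
    x = min(int(u * width), width - 1)
    y = min(int(v * height), height - 1)
    hidden = dense(latent_grid[y][x], layers[0], relu)
    return dense(hidden, layers[1], sigmoid)


rng = random.Random(0)
# An 8x8 grid of latent vectors stands in for the stored "compressed texture".
latent_grid = [[[rng.random() for _ in range(LATENT_CHANNELS)]
                for _ in range(8)] for _ in range(8)]
layers = [make_layer(LATENT_CHANNELS, HIDDEN, rng),
          make_layer(HIDDEN, OUT_CHANNELS, rng)]

rgb = decode_texel(latent_grid, 0.25, 0.75, layers)
```

The point of the sketch is the data flow: what sits in memory is the small latent grid and a handful of weights, and the full-colour texel only exists for the moment it is decoded.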

Furthermore, NVIDIA presented another comparison using the same 970 MB memory budget. In this test, BCn compression exhibited noticeable visual artifacts, whereas Neural Texture Compression successfully maintained a higher level of detail and fidelity. The implications for gaming are straightforward: smaller game installations, lighter updates, reduced VRAM pressure, and the potential to implement higher-quality textures without a disproportionate increase in resource consumption.

Neural Materials Put to the Test

The second segment focused on Neural Materials, with equally significant implications. Traditional materials accumulate increasingly complex and costly layers, textures, and classical calculations; NVIDIA proposes replacing them with a compact neural representation. In one example, a material with 19 distinct channels was reduced to just 8 channels in its neural version. According to the presentation, these channels can operate at arbitrary resolutions between 8 and 64 bits.

Regarding performance, the data presented was for 1080p with one sample per pixel, measured as total frame render time in milliseconds, and showed a speedup of between 1.4x and 7.7x. The range is broad, but even the minimum is significant, and the upper end substantially changes what is feasible for real-time rendering of complex materials.
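As a rough worked example of what that range means in frame time, the snippet below applies both ends of the reported speedup to a baseline. The 10 ms baseline is a hypothetical figure chosen for easy arithmetic; the presentation's actual per-scene timings are not reproduced here.

```python
def frame_time_after_speedup(baseline_ms: float, speedup: float) -> float:
    """A speedup of Nx means the same frame takes 1/N of the original time."""
    return baseline_ms / speedup


BASELINE_MS = 10.0  # hypothetical frame time with the traditional material

slowest_case_ms = frame_time_after_speedup(BASELINE_MS, 1.4)
fastest_case_ms = frame_time_after_speedup(BASELINE_MS, 7.7)

print(f"1.4x speedup: {slowest_case_ms:.2f} ms")  # prints 7.14 ms
print(f"7.7x speedup: {fastest_case_ms:.2f} ms")  # prints 1.30 ms
```

Under that assumed baseline, the low end saves just under 3 ms per frame and the high end saves almost 9 ms.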

NVIDIA also made a technical point that matters for games: these networks, when evaluating materials or decoding textures, can execute millions of times per frame. The emphasis is therefore on small, shallow MLPs that are integrated directly into shaders and optimized to run on the GPU using Tensor Cores. This is the foundation of both Neural Texture Compression and Neural Materials.
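A back-of-the-envelope count shows why network size matters at this scale. The sketch below assumes one network evaluation per pixel at 1080p with one sample per pixel, and a hypothetical MLP shape (`LAYER_SIZES`); neither the shape nor the one-evaluation-per-pixel simplification comes from NVIDIA's presentation.

```python
WIDTH, HEIGHT = 1920, 1080
SAMPLES_PER_PIXEL = 1

# Hypothetical shallow MLP: 8 inputs, two hidden layers of 16, 3 outputs.
LAYER_SIZES = [8, 16, 16, 3]


def macs_per_evaluation(sizes):
    """Multiply-accumulate operations for one forward pass (biases ignored)."""
    return sum(n_in * n_out for n_in, n_out in zip(sizes, sizes[1:]))


evaluations_per_frame = WIDTH * HEIGHT * SAMPLES_PER_PIXEL
macs_each = macs_per_evaluation(LAYER_SIZES)
macs_per_frame = evaluations_per_frame * macs_each

print(f"evaluations per frame: {evaluations_per_frame:,}")  # 2,073,600
print(f"MACs per evaluation:   {macs_each}")                # 432
print(f"MACs per frame:        {macs_per_frame:,}")         # 895,795,200
```

Even this tiny network adds up to close to a billion multiply-accumulates per frame, and doubling the hidden width to 32 would raise the per-evaluation cost from 432 to 1,376. That is the kind of workload that motivates keeping the networks shallow and running them on dedicated matrix hardware.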

This isn’t AI as a showpiece for a promotional trailer; that role was already filled by DLSS 5 and its 2D frames. What NVIDIA is aiming for now is to offload some of the demanding classical rendering work to tiny neural networks in order to reduce memory consumption, bandwidth, and overall complexity. Based on what has been demonstrated, future games could require less VRAM, or developers could use the same memory budget for higher-quality assets.