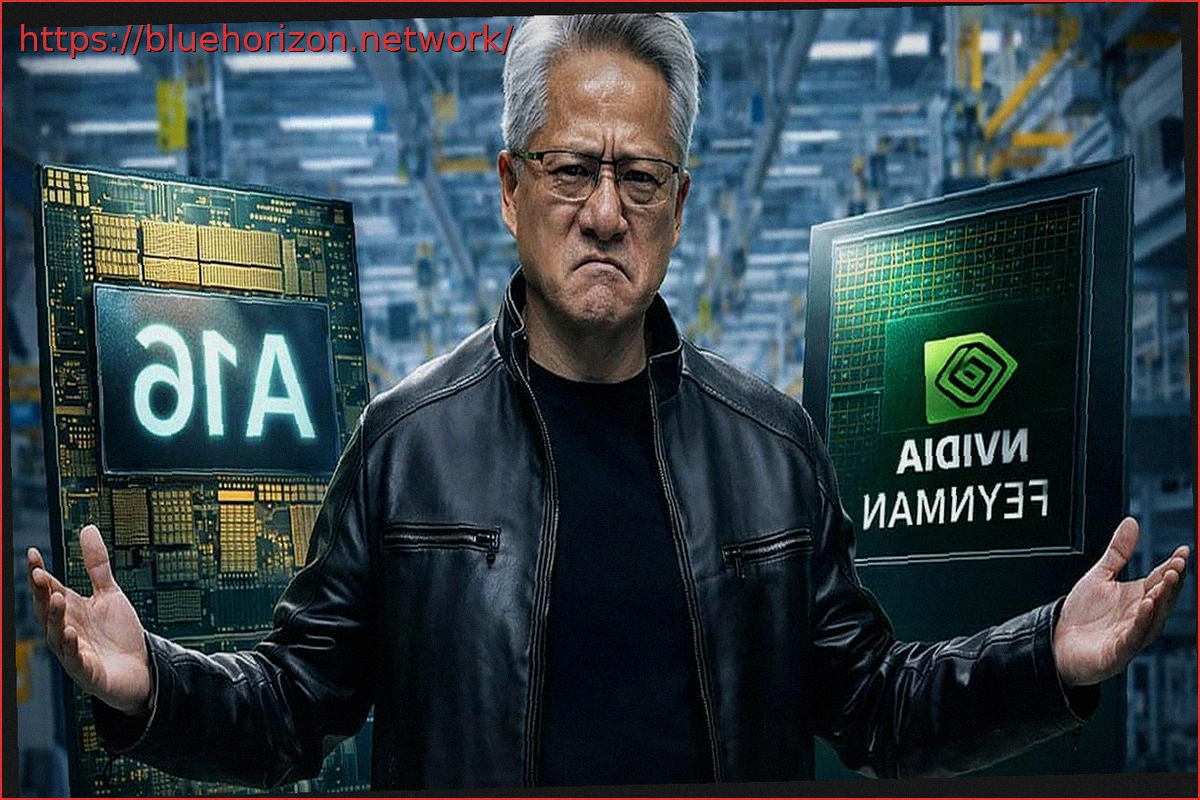

What at first looked like a remarkable achievement for the world’s largest chip manufacturer now appears to be creating significant problems. Reports from Taiwan indicate that TSMC is short of manufacturing capacity on its A16 node, and that this is directly affecting the design of NVIDIA’s upcoming Feynman AI GPUs. The situation raises questions about the future of these GPUs and of any derivatives for the PC and laptop markets.

Feynman, scheduled for release sometime in 2028, will not be able to rely solely on the A16 node. Instead, its design will have to be split between A16 and the more established N3P process. This is not an ordinary strategic choice but a direct consequence of capacity limits on the industry’s most advanced manufacturing node, where even the leading foundry is struggling to meet demand.

NVIDIA Forced to Split Feynman Design Between A16 and N3P

This situation raises a critical question: if even NVIDIA cannot secure enough capacity, what does that mean for the rest of the industry? If the reports are accurate, there appears to be virtually no capacity left for other customers.

Industry sources suggest that the original plan was to fabricate Feynman entirely on TSMC’s A16 node, part of its 2nm family. The capacity crunch, however, is forcing a re-evaluation and a hybrid approach. The most critical parts of the chip are expected to stay on A16, while other blocks move to N3P, a more mature node with greater availability. Hybrid designs have been used for years, but in this case the choice is driven not by cost or energy efficiency but purely by supply.

Silence from TSMC and NVIDIA: Too Sensitive to Confirm?

In line with its long-standing policy, TSMC does not comment on rumors or on individual customers. NVIDIA has likewise offered no official response. The underlying context is nevertheless clear: demand for advanced processes, particularly for AI-driven high-performance computing (HPC), is far exceeding current projections. A16 is a significant step beyond N2, adding backside power delivery (which TSMC calls Super Power Rail), and was designed specifically for this kind of high-performance product.

According to TSMC’s previous announcements, mass production of A16 is slated to begin in the second half of 2026. The ramp-up will be gradual, targeting roughly 20,000 wafers per month by late 2027 and nearly 40,000 wafers per month in 2028. For the 2nm family as a whole, TSMC aims to reach 200,000 wafers per month, an ambitious scale that underscores how central these nodes are expected to be to the industry.

A16 is also more complex to manufacture than the 2nm-class processes due to reach the market within months. It is estimated to require about 30% more chemical-mechanical polishing (CMP) steps than N2P, along with tighter planarity requirements. This is putting pressure on the entire supply chain, with suppliers expanding capacity for critical consumables and carrier wafers to keep pace.

If the reported A16 capacity shortfall is accurate, the revision of Feynman’s design is not an anomaly but a direct consequence of the current state of the industry. The irony is hard to miss: AI demand is saturating the very manufacturing capacity needed to build AI chips, forcing designers to move parts of their designs to other process nodes. Such is the current landscape.